Following the Sora announcement, OpenAI unveiled another groundbreaking offering: ChatGPT-4o, powered by its latest flagship model, GPT-4o.

The 'o' stands for 'omni,' reflecting its all-encompassing abilities. As an omni model, it accepts any combination of text, voice, images, and video as input and can generate mixed outputs of text, voice, and images. Remarkably, this powerful upgrade is available to free users! But does that make the paid version redundant?

OpenAI's CTO Mira Murati introduces ChatGPT-4o. (Source: OpenAI)

Let's dive into the details of this latest ChatGPT model, its features and advantages, use cases, and the differences between the free and paid versions.

Features and Advantages of ChatGPT-4o

・Robust Voice and Image Recognition Capabilities:

ChatGPT-4o has powerful voice and image recognition, so it can handle voice commands and analyze images directly. The ChatGPT app can now act as a smartphone voice assistant, using the camera and microphone to perceive its surroundings.

・Faster Speed:

ChatGPT-4o is significantly faster than previous models. It can respond to voice input in as little as 232 milliseconds, with an average of 320 milliseconds, which is close to human response times in conversation and makes interactions feel more natural. By comparison, ChatGPT's earlier Voice Mode averaged 2.8 seconds with GPT-3.5 and 5.4 seconds with GPT-4.

・Emotional Expression:

ChatGPT-4o's voice generation can convey emotion, adjust its tone, and vary its speaking pace: it can laugh mid-conversation, sing, or speak dramatically. Five voice options make conversations feel more natural and authentic, reminiscent of the movie "Her."

・Multimodal Integration:

ChatGPT-4o can combine voice commands with image input for multimodal analysis, giving richer feedback. For instance, a user can take a picture, ask about something in it, and receive a detailed spoken explanation.

Can ChatGPT-4o become a cloud-based companion?

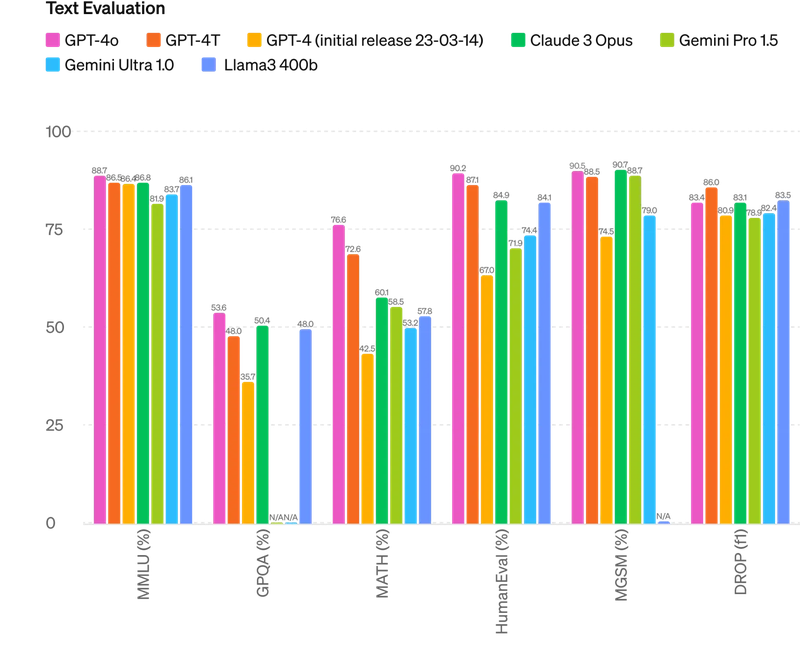

Comparison of ChatGPT-4o with Other Models

In OpenAI's published benchmarks, ChatGPT-4o outperforms its predecessors and competing models across a range of tests. It answers complex knowledge and math questions, accurately recognizes and translates speech, and performs exceptionally well in multilingual and visual tasks, placing it among the most powerful language models available in both adaptability and accuracy.

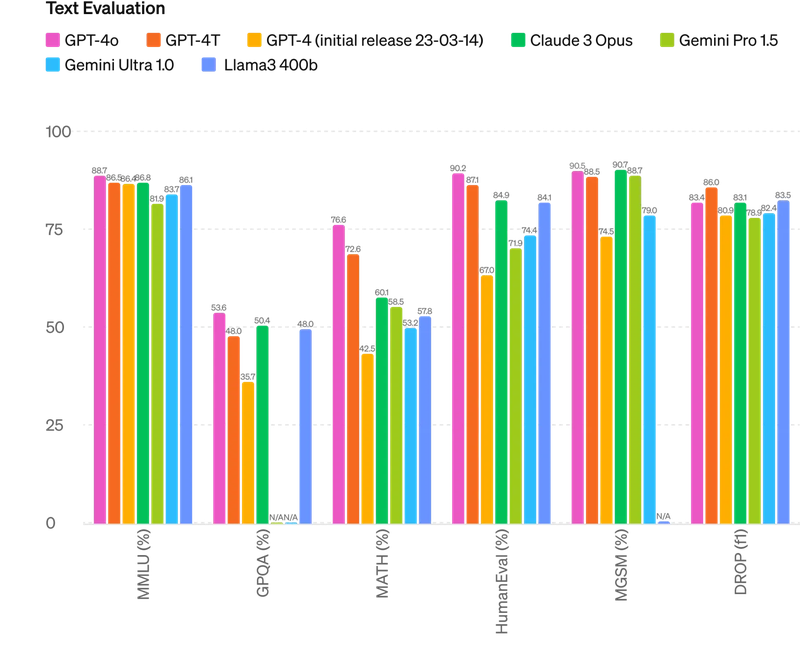

・Textual Reasoning:

Across multiple text reasoning benchmarks, ChatGPT-4o's performance is well balanced, and it leads other models on most of them.

(Source: OpenAI)

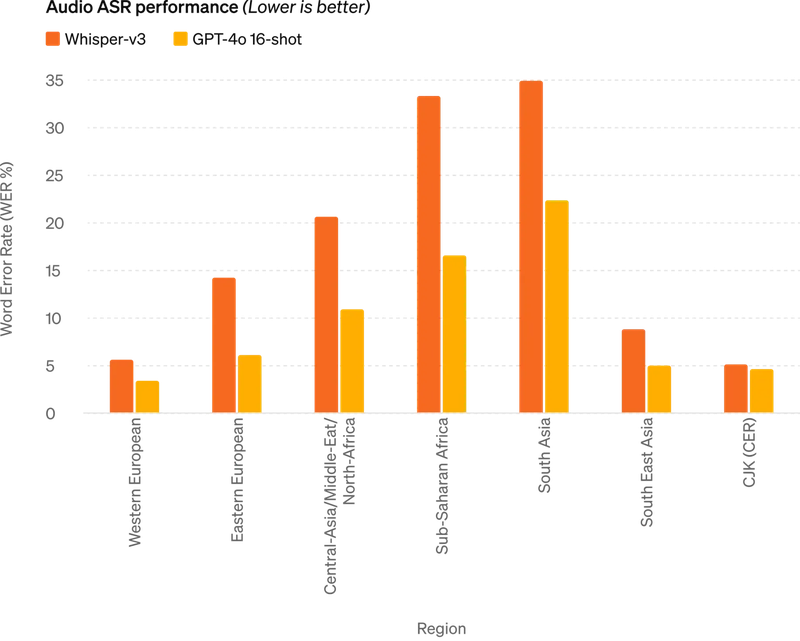

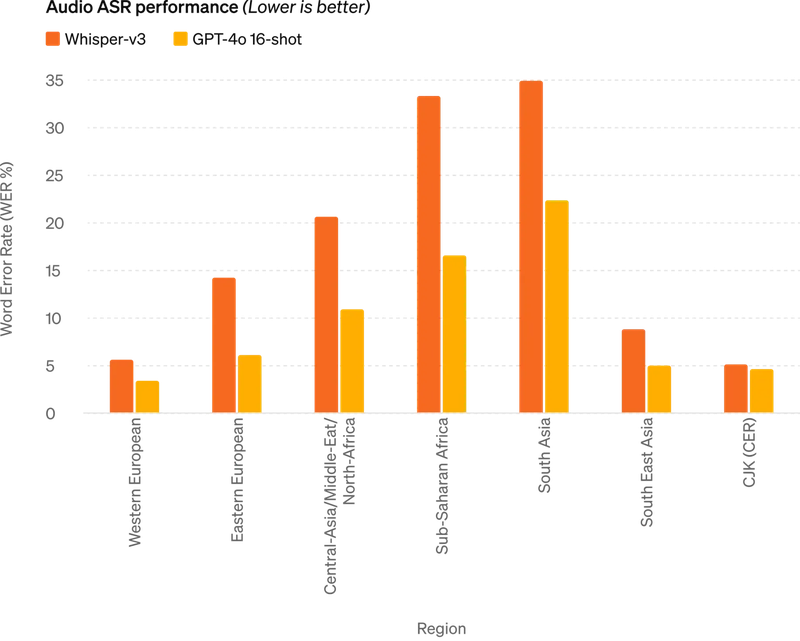

・Voice Recognition:

ChatGPT-4o also excels at speech recognition, achieving a significantly lower Word Error Rate (WER, where lower is better) than Whisper-v3. The improvement is especially large for lower-resourced languages, such as those spoken in parts of Asia and Africa.

(Source: OpenAI)
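To make the WER metric concrete: WER counts the word substitutions (S), deletions (D), and insertions (I) needed to turn a transcript into the reference text, divided by the number of words N in the reference, so WER = (S + D + I) / N. The Python sketch below computes this textbook definition with a word-level edit distance. It is an illustration only: OpenAI's actual evaluation pipeline (including any text normalization such as lowercasing or punctuation removal) is not described in the source, and `word_error_rate` is a hypothetical helper name.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Textbook WER = (S + D + I) / N via word-level edit distance.

    Assumption: words are split on whitespace with no normalization.
    """
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # dp[i][j] = minimum edits to turn the first i reference words
    # into the first j hypothesis words
    dp = [[0] * (len(hyp) + 1) for _ in range(len(ref) + 1)]
    for i in range(len(ref) + 1):
        dp[i][0] = i  # delete all i reference words
    for j in range(len(hyp) + 1):
        dp[0][j] = j  # insert all j hypothesis words

    for i in range(1, len(ref) + 1):
        for j in range(1, len(hyp) + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dp[i][j] = min(
                dp[i - 1][j] + 1,         # deletion
                dp[i][j - 1] + 1,         # insertion
                dp[i - 1][j - 1] + cost,  # substitution (or match)
            )
    return dp[len(ref)][len(hyp)] / len(ref)


# 1 substitution (sat -> sit) + 1 deletion ("the") over 6 reference words
print(word_error_rate("the cat sat on the mat", "the cat sit on mat"))
# prints 0.3333333333333333
```

Because insertions are counted, WER can exceed 1.0 when a transcript adds many spurious words.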

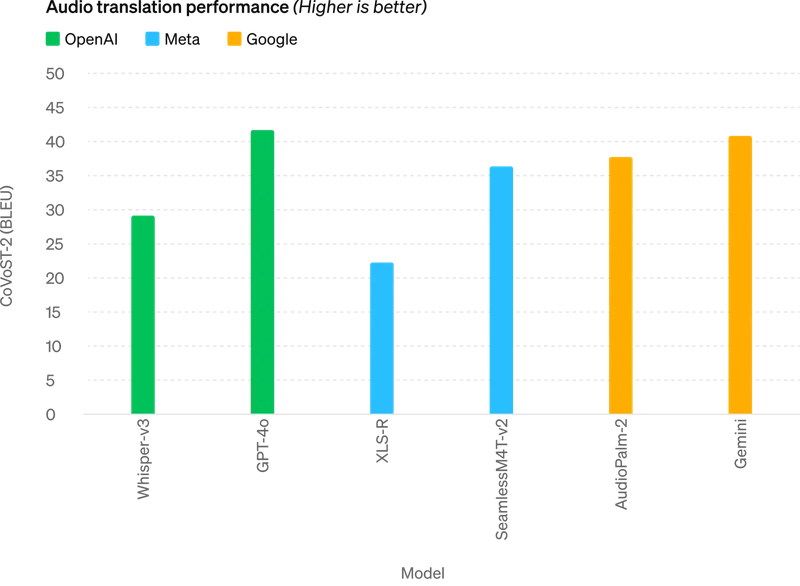

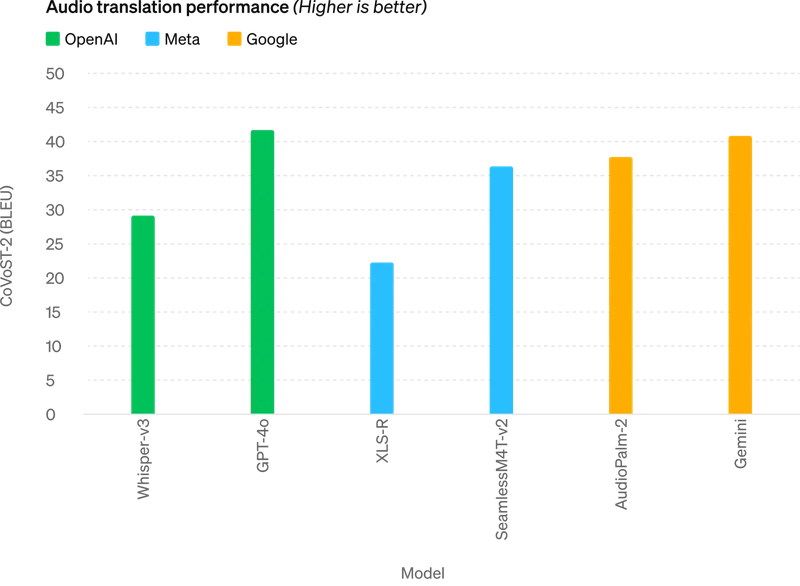

・Voice Translation:

ChatGPT-4o also outperforms speech translation models from Meta and Google in OpenAI's benchmark, and the live translation demo showed smooth, fluent bilingual dialogue.

(Source: OpenAI)

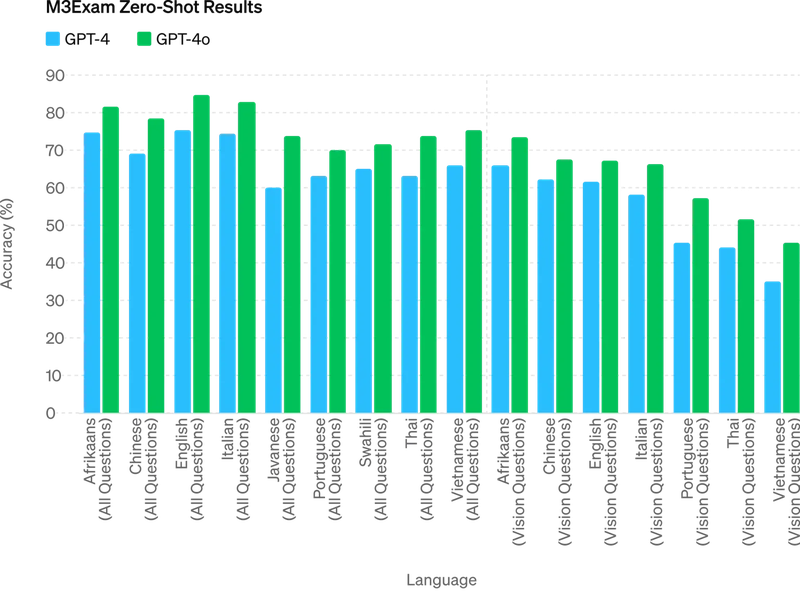

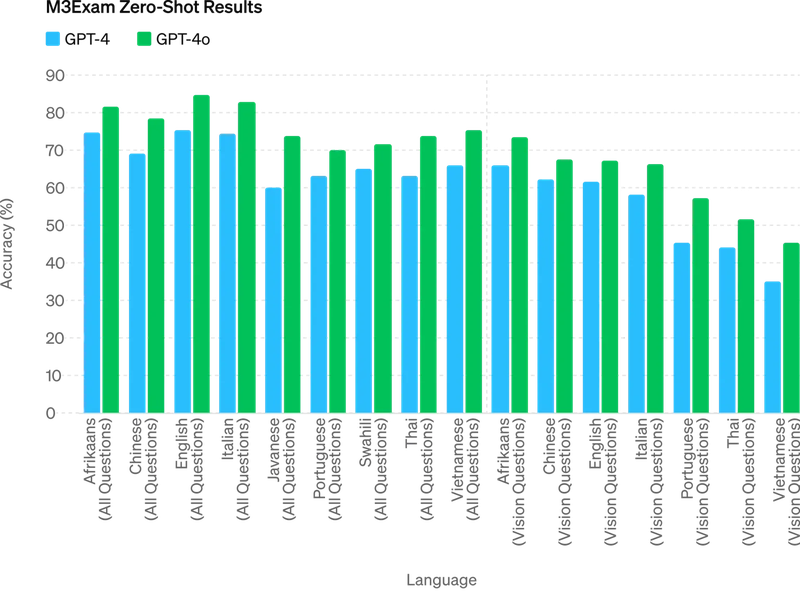

・M3Exam Testing:

The M3Exam benchmark tests both multilingual and visual understanding with multiple-choice questions drawn from standardized exams in different countries, some of which include charts and diagrams. GPT-4o outperforms GPT-4 on this benchmark in every language tested.

(Source: OpenAI)

Practical Applications of ChatGPT-4o

Beyond the live demos, in which ChatGPT-4o held natural, lively conversations with three presenters, its potential applications are wide-ranging:

・Real-time Multilingual Interpretation:

Multilingual translation is one of its most promising functions, and the demo even sent Duolingo's stock price down. In the first video, "Point and Learn Spanish with ChatGPT-4o," a user learns Spanish vocabulary by pointing the camera at objects; the model accurately identifies each item on the table and switches seamlessly between English and Spanish to describe it.

Seamless English-Spanish switching. (Source: OpenAI)

In the second video, "Realtime Translation with ChatGPT-4o", it acts as a Spanish-English interpreter, translating each speaker's words consecutively in real time.

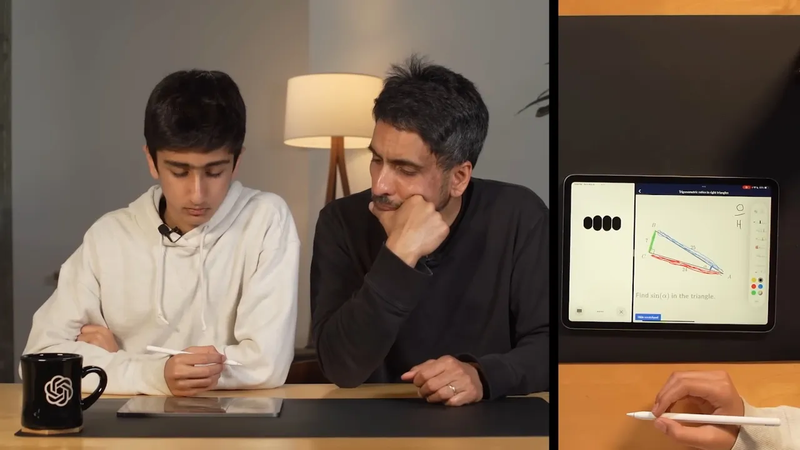

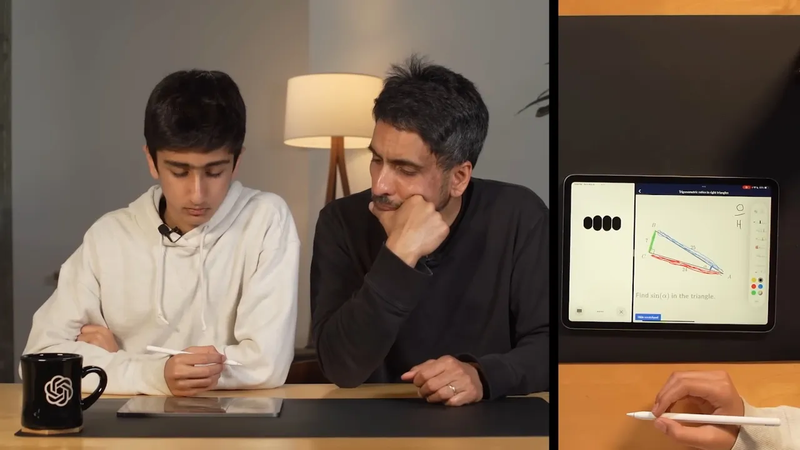

・Solving Math Problems:

Whether it's a handwritten math problem or a geometry diagram on an exam paper, ChatGPT-4o can walk you through the solution step by step, essentially serving as a private tutor.

Like having a free tutor. (Source: OpenAI)

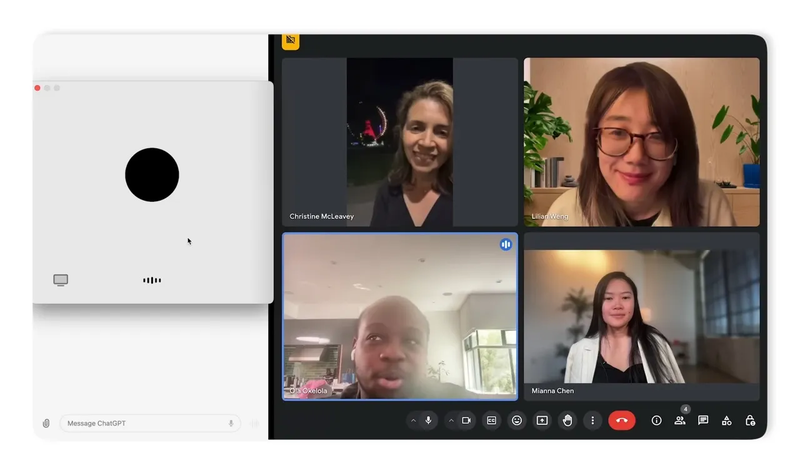

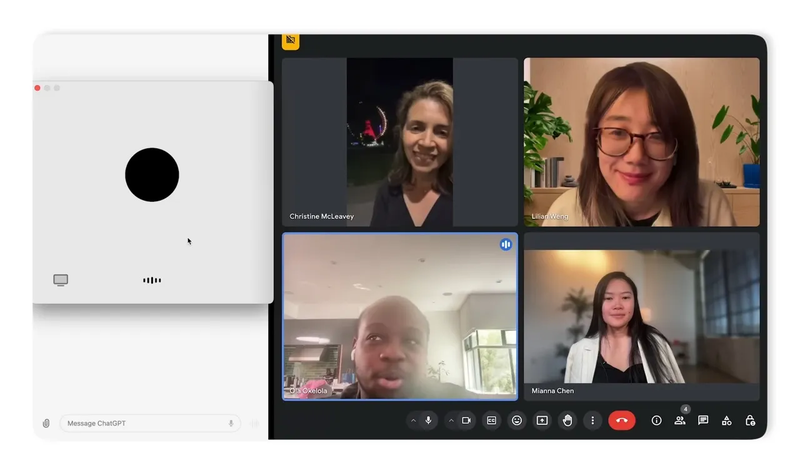

・Telling Jokes, Participating in Video Conferences, and Even Two AIs Conversing:

While some demos may seem frivolous, they show ChatGPT-4o emphasizing a human touch beyond pure functionality. It responds at a natural conversational speed, and its voice technology removes the stiffness of talking to a machine; in the near future, it could become your AI companion. In the demos, ChatGPT-4o takes on a sarcastic persona, tells dad jokes, joins video conferences, and even compliments a cute dog. Most impressively, two instances of ChatGPT-4o hold a conversation with each other on their own.

Could ChatGPT-4o take over my meetings in the future? (Source: OpenAI)

ChatGPT-4o: Differences Between Free and Paid Versions

OpenAI has announced that GPT-4o is rolling out gradually to free users, while ChatGPT Plus subscribers already have access. However, usage limits and available features differ between the free and paid versions, as summarized below:

| Feature | Free Version | Paid Version (Plus) |
|---|---|---|
| Essentials | | |
| Messages & Interactions | Unlimited | Unlimited |
| Chat History | Unlimited | Unlimited |
| Supported Devices | Web, iOS, Android, and Mac desktop | Web, iOS, Android, and Mac desktop |
| Model Quality | | |
| Access to GPT-3.5 | Unlimited | Unlimited |
| Access to GPT-4o | Limited | Up to 5x the free usage limit |
| Access to GPT-4 | Not available | Available |
| Response Speed | Limited by bandwidth & availability | Fast |
| Context Window* | 8K tokens | 32K tokens |
| Regular Quality and Speed Updates | Yes | Yes |
| Features | | |
| Voice Recognition | Yes | Yes |
| Memory | Yes | Yes |
| Browser Access | Limited | Yes |
| Advanced Data Analysis | Limited | Yes |
| Visual Functions | Limited | Yes |
| File Upload | Limited | Yes |
| Exploring and Using GPTs | Limited | Yes |
| Creating and Sharing GPTs | Not available | Yes |
| Image Generation | Not available | Yes |
| Privacy | | |
| Privacy Options | Opt-out available | Opt-out available |

*Context Window: The amount of text, measured in tokens, that the model can consider at once. Simply put, it is how much text the model can "remember" at one time. In chat applications, the window determines how much of the earlier conversation the model can reference when generating a response; anything beyond it is no longer visible to the model.
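To make the footnote above concrete, here is a minimal Python sketch of one common way a chat client can keep a conversation inside a fixed context window: walk backward from the newest message and drop older ones once the budget is used up. This is an illustrative assumption, not how ChatGPT actually manages history (which the source does not describe). `fit_to_context` and `count_tokens` are hypothetical helpers, and counting whitespace-separated words is a stand-in for a real subword tokenizer, which would report more tokens for the same text.

```python
def count_tokens(text: str) -> int:
    # Crude stand-in: one "token" per whitespace-separated word.
    # Real models use a subword tokenizer, so true counts are higher.
    return len(text.split())


def fit_to_context(messages: list[dict[str, str]], max_tokens: int) -> list[dict[str, str]]:
    """Keep the most recent messages whose combined size fits in max_tokens."""
    kept = []
    used = 0
    for message in reversed(messages):  # walk from newest to oldest
        cost = count_tokens(message["content"])
        if used + cost > max_tokens:
            break  # this message and everything older fall outside the window
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    {"role": "user", "content": "one two three four"},
    {"role": "assistant", "content": "five six seven"},
    {"role": "user", "content": "eight nine"},
]
print([m["content"] for m in fit_to_context(history, max_tokens=6)])
# prints ['five six seven', 'eight nine']
```

With an 8K-token budget (the free tier in the table), older turns drop out of view sooner than with a 32K budget. Stopping at the first message that does not fit, rather than skipping it and continuing, keeps the retained history contiguous.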

As OpenAI's latest flagship model, GPT-4o builds on the foundation of GPT-4, keeping its powerful multimodal capabilities while improving speed and lowering costs. As a result, GPT-4o can meet a wide range of user needs on both the free and paid tiers.

While the market eagerly awaits GPT-5, the arrival of an omni model is still highly significant at this stage of AI development. It broadens the range of AI applications and lays the groundwork for more diverse developments in the future.